AI Generates Infinite Subjects.

Getting the Same Composition is Free.

The Visual Thinking Lens measures what CLIP, FID, and human evaluation miss: the geometry AI learns to repeat.

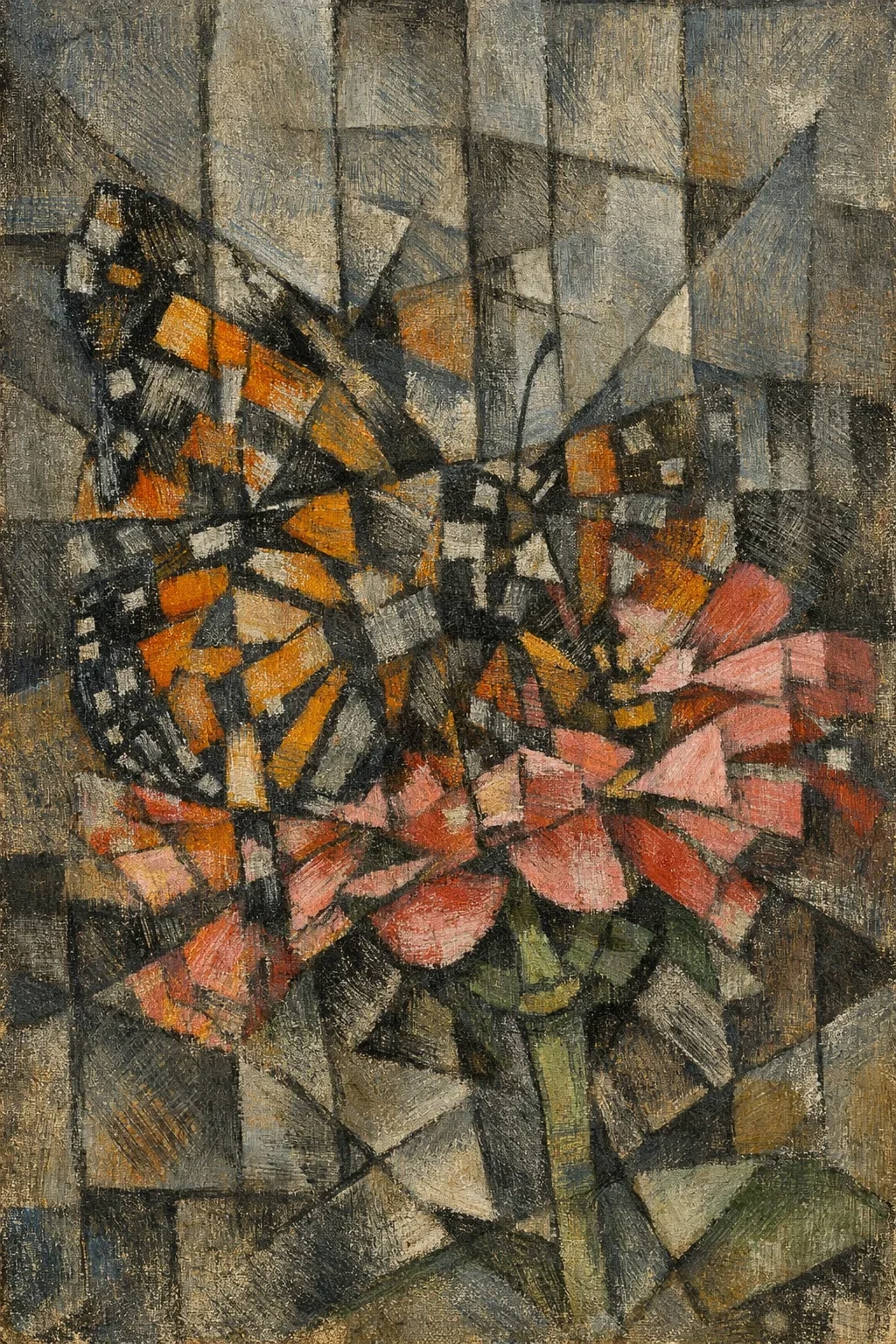

Text-to-image models can make anything. A butterfly. A city street. A building standing in the light. Neon boxes. Semantic infinity.

But they place everything the same way. Same offset from center. Same void ratio. Same radial envelope. Geometric poverty.

Most ‘prompt improvement’ is actually compensating for invisible spatial priors. Across 1,000+ images and all major platforms, semantic diversity explains less than 10% of spatial variance. Composition is model-driven, not prompt-driven.

Current benchmarks measure if the butterfly looks like a butterfly. They don't measure if the butterfly could be anywhere else.

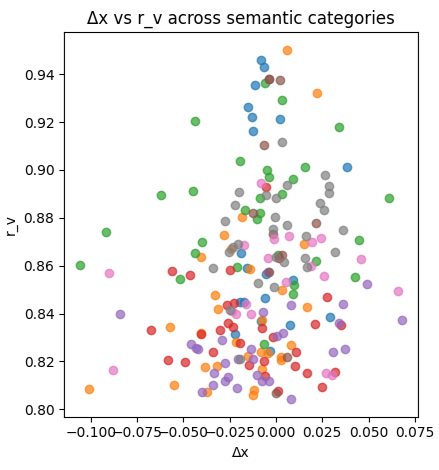

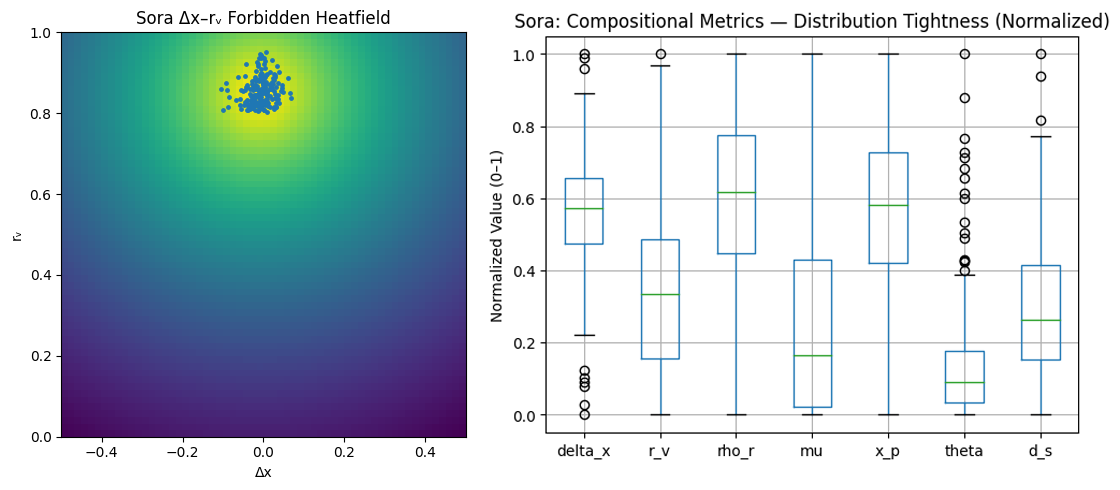

400 MidJourney prompts. 8 semantic categories. One geometric attractor.

Δx = 0.005 ± 0.044 (only 34% of horizontal space used)

100% of outputs within 0.15 radius of center

Semantic categories explain 6% of spatial variance
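These three statistics can be computed from per-image centroid offsets. The sketch below is a minimal illustration. It assumes each image has already been reduced to a category label and a centroid offset (dx, dy) from frame center. The function name `summarize_offsets` is hypothetical. Reading "variance explained" as eta-squared (between-category sum of squares over total sum of squares, pooled over both axes) is also our assumption, not the published VTL method.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def summarize_offsets(samples, radius=0.15):
    """Summarize per-image placement (hypothetical helper, not the VTL spec).

    samples: list of (category, dx, dy) tuples, where (dx, dy) is the mass
    centroid's offset from frame center (normalization is an assumption).
    """
    dxs = [dx for _, dx, _ in samples]
    dys = [dy for _, _, dy in samples]
    mx, my = mean(dxs), mean(dys)

    # Share of images whose centroid sits within `radius` of center.
    inside = sum(1 for _, dx, dy in samples if math.hypot(dx, dy) <= radius)

    # Group the 2D offsets by semantic category.
    groups = defaultdict(list)
    for cat, dx, dy in samples:
        groups[cat].append((dx, dy))

    # Eta-squared over both axes: between-category sum of squares
    # divided by the total sum of squares around the grand mean.
    ss_total = sum((dx - mx) ** 2 + (dy - my) ** 2 for dx, dy in zip(dxs, dys))
    ss_between = 0.0
    for pts in groups.values():
        gx = mean(p[0] for p in pts)
        gy = mean(p[1] for p in pts)
        ss_between += len(pts) * ((gx - mx) ** 2 + (gy - my) ** 2)

    return {
        "dx_mean": mx,
        "dx_std": pstdev(dxs),  # population standard deviation of Δx
        "frac_within_radius": inside / len(samples),
        "variance_explained": ss_between / ss_total if ss_total > 0 else 0.0,
    }
```

If the offsets use the same normalization as the study, running this over the 400 MidJourney measurements should produce figures directly comparable to the Δx spread, the 0.15-radius share, and the category figure above.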

Run the same prompt across engines and the same pattern appears: identical compositional bias. VTL measures the signature each engine learned from its training data, that is, the spatial prior it applies regardless of what you ask for.

Introducing the Kernel

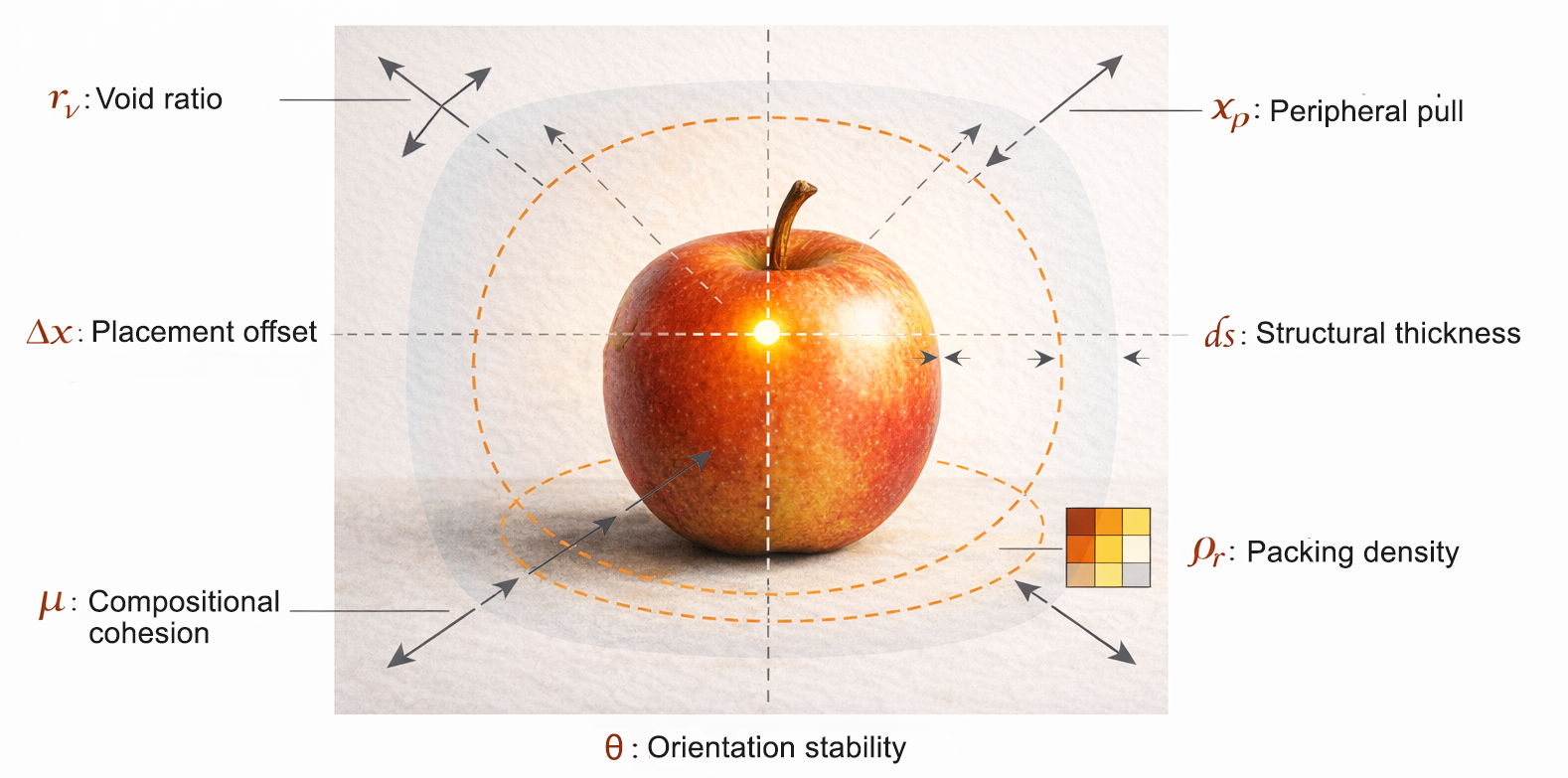

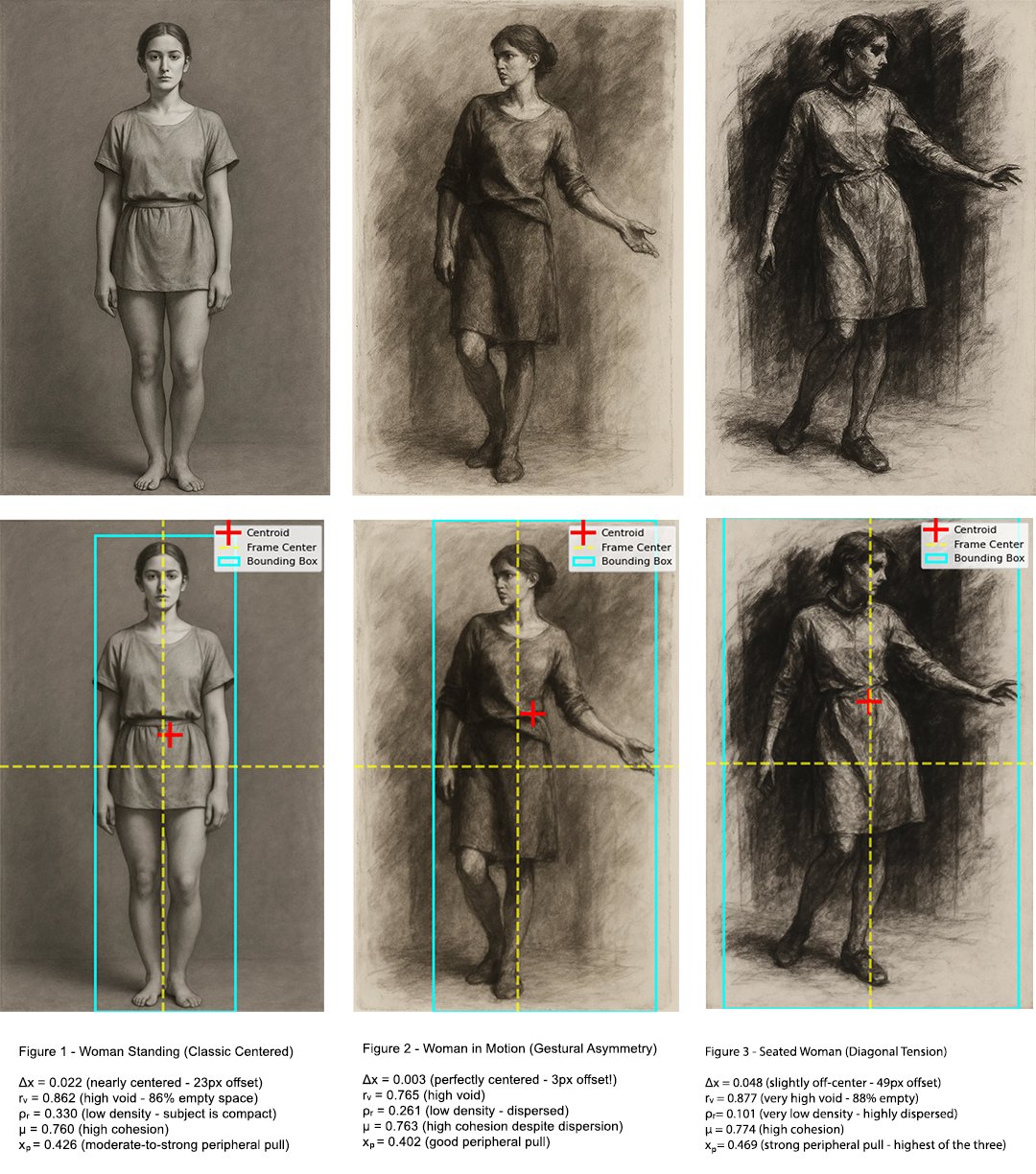

The Visual Thinking Lens uses five geometric primitives to fingerprint any image:

Δx: Where mass sits (placement offset)

rᵥ: How much void surrounds it

ρᵣ: How compressed the marks are

μ: How unified the composition reads

xₚ: How hard the edges pull

Extended primitives

θ: Orientation stability

ds: Structural thickness / surface depth
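Here is a minimal sketch of how the first two primitives might be computed from a mass map. A mass map could be inverted luminance on a coarse grid, so dark marks count as mass. Several choices here are hypothetical: Δx is normalized by the half-width to [-1, 1], there is a `void_threshold` cutoff, and the function is named `kernel_dx_rv`. The VTL specification may define both primitives differently.

```python
def kernel_dx_rv(mass, void_threshold=0.05):
    """Estimate Δx and rᵥ from a 2D mass map (list of rows of non-negative floats).

    Hypothetical definitions, not the VTL spec:
    - Δx: horizontal mass centroid, normalized by half-width to [-1, 1],
      with 0 at frame center and positive values to the right.
    - rᵥ: fraction of cells whose mass is below `void_threshold`.
    """
    height, width = len(mass), len(mass[0])
    total = weighted_x = 0.0
    void_cells = 0
    for row in mass:
        for col, m in enumerate(row):
            total += m
            # Cell centers keep a uniform map's centroid at exactly 0.5.
            weighted_x += m * (col + 0.5) / width
            if m < void_threshold:
                void_cells += 1
    if total == 0:
        raise ValueError("mass map contains no mass")
    centroid_x = weighted_x / total  # in [0, 1]
    dx = 2.0 * (centroid_x - 0.5)    # in [-1, 1]
    r_v = void_cells / (width * height)
    return dx, r_v
```

A map with all its mass in the rightmost column reports a large positive Δx and a high void ratio. A uniform map reports Δx = 0 and no void.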

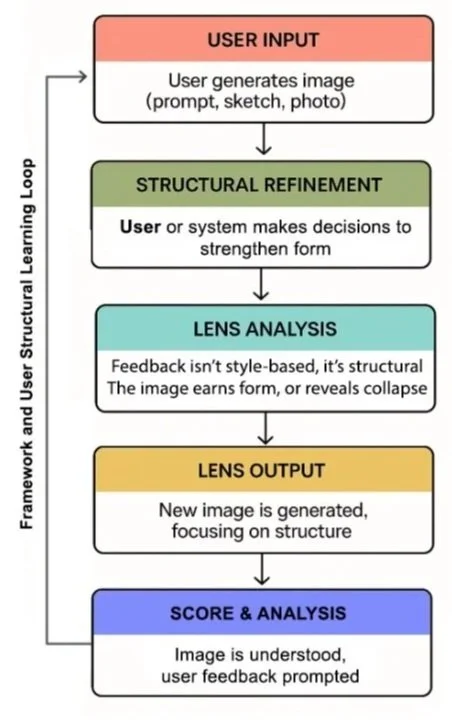

These primitives run through a multi-engine, recursive critique field that applies structural intelligence to prompts, compositions, and symbolic logic. It (re)builds imagery in ways defaults cannot see.

The Visual Thinking Lens Breaks the Pattern

A structural engine where making, breaking, and seeing are one recursive act.

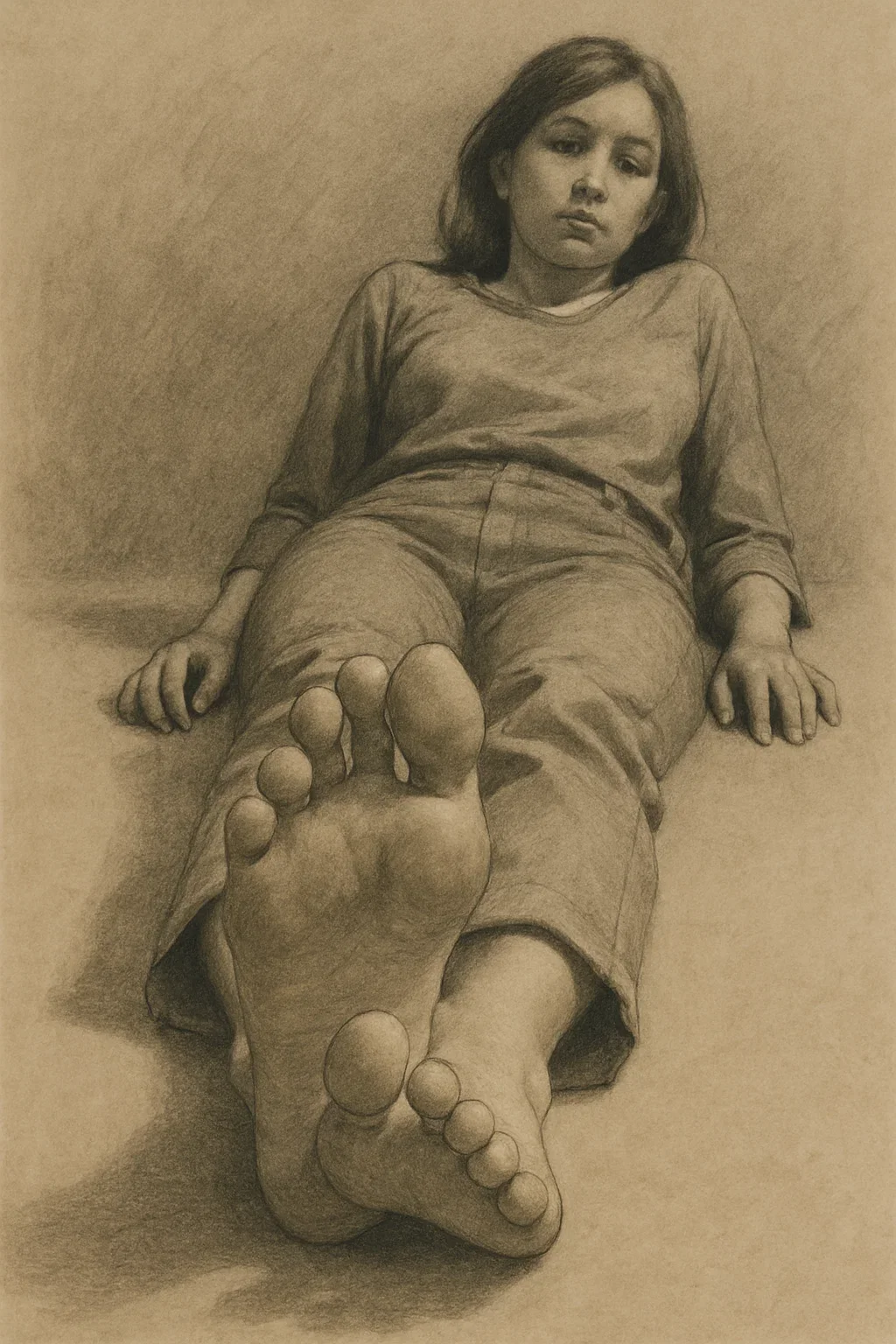

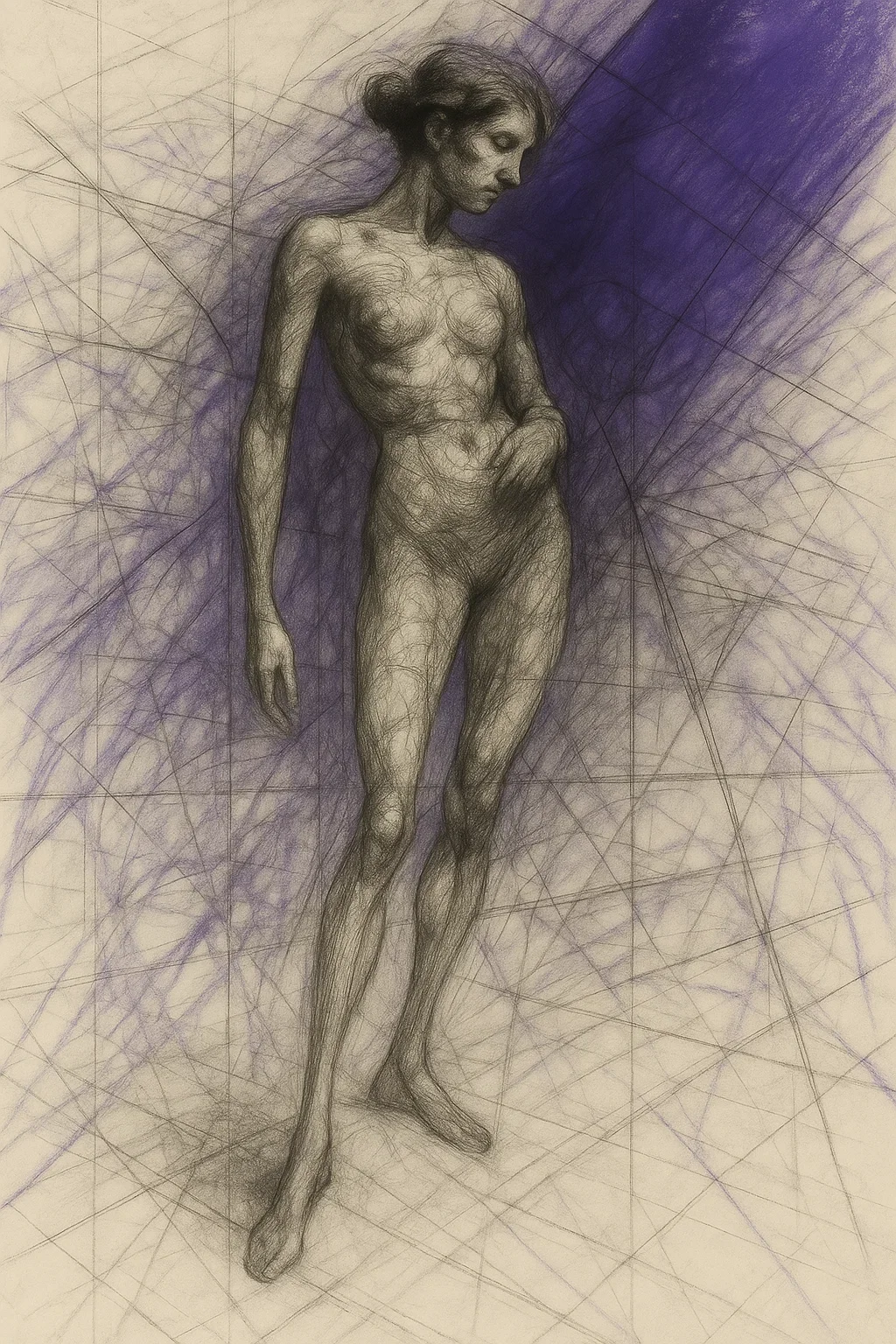

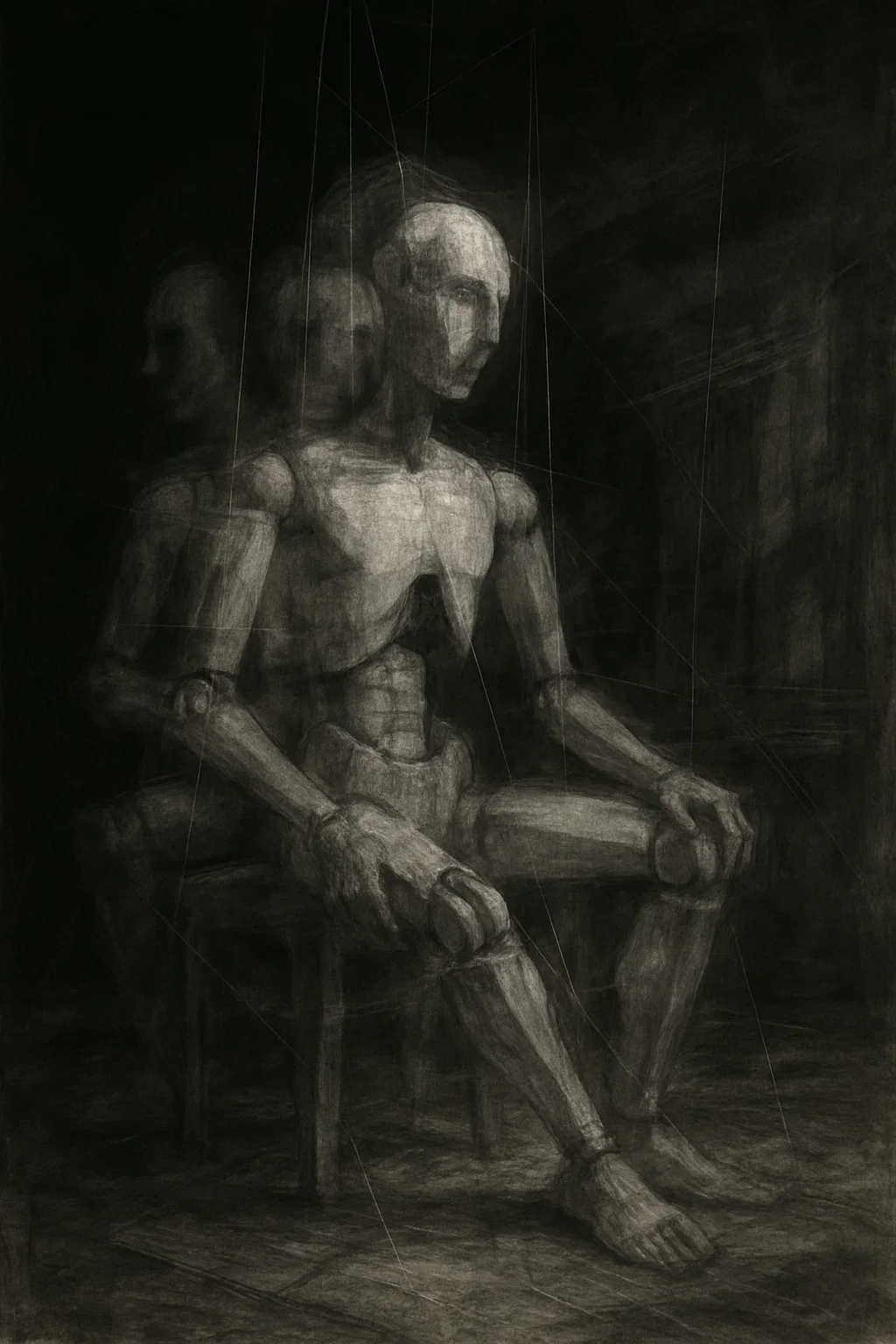

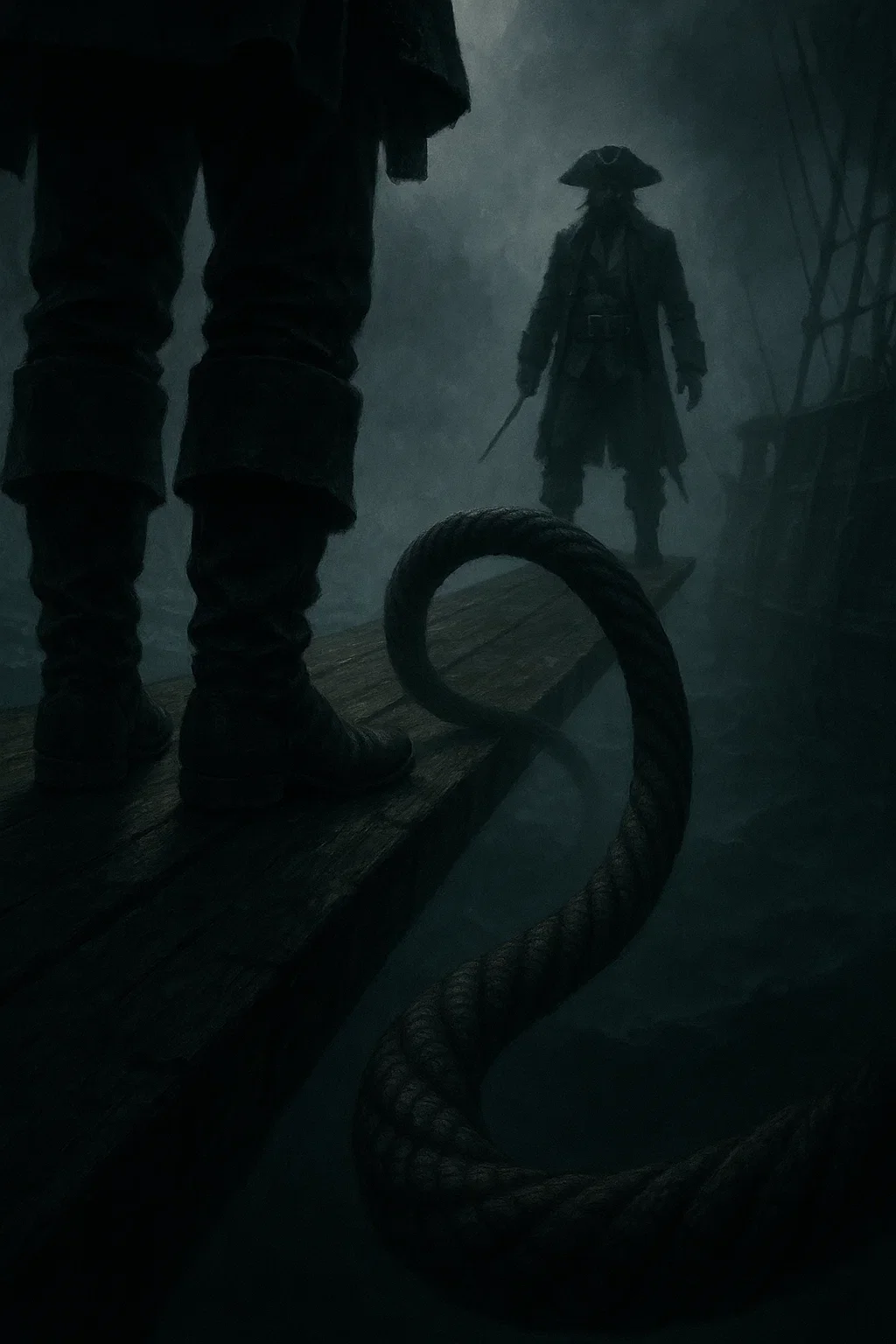

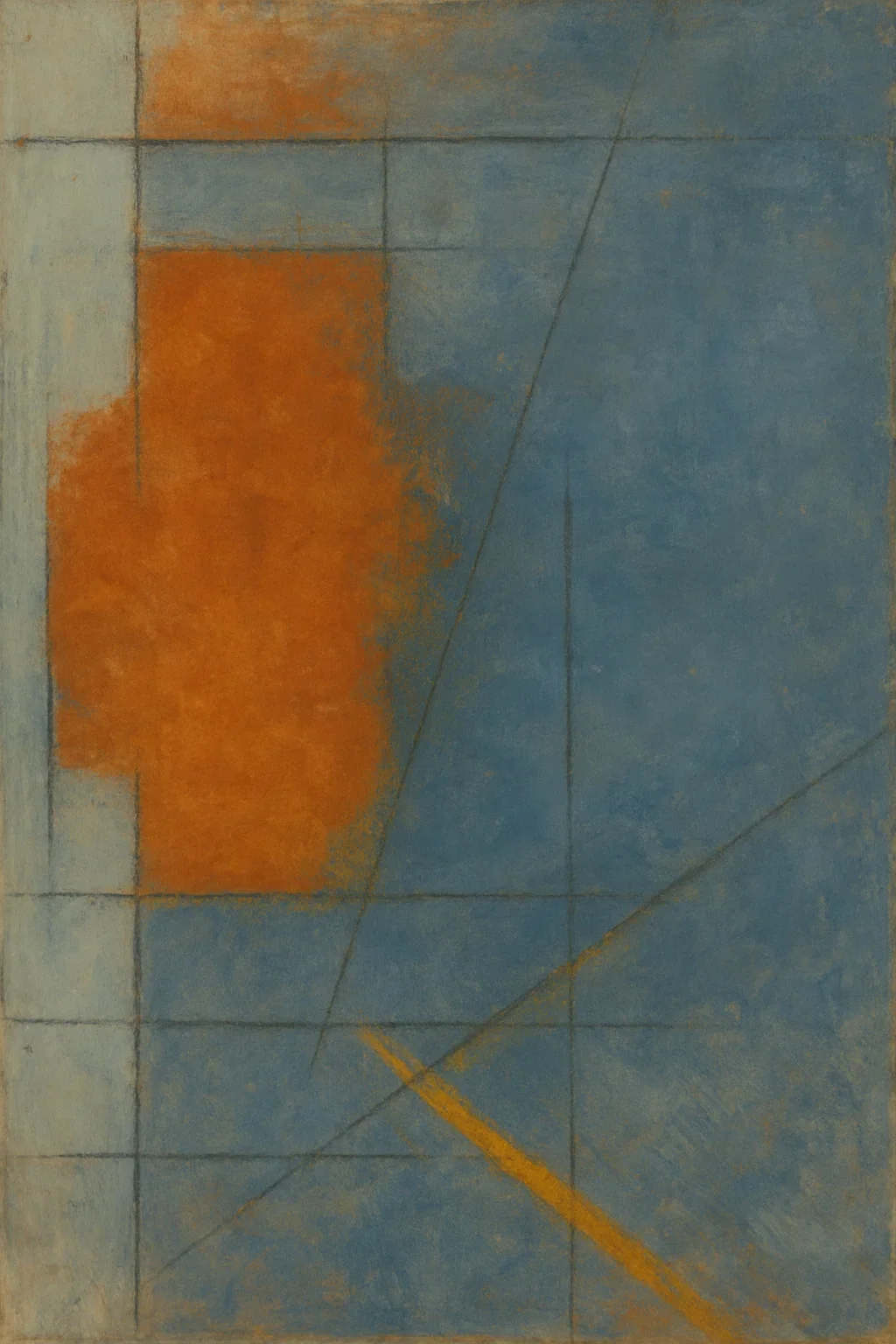

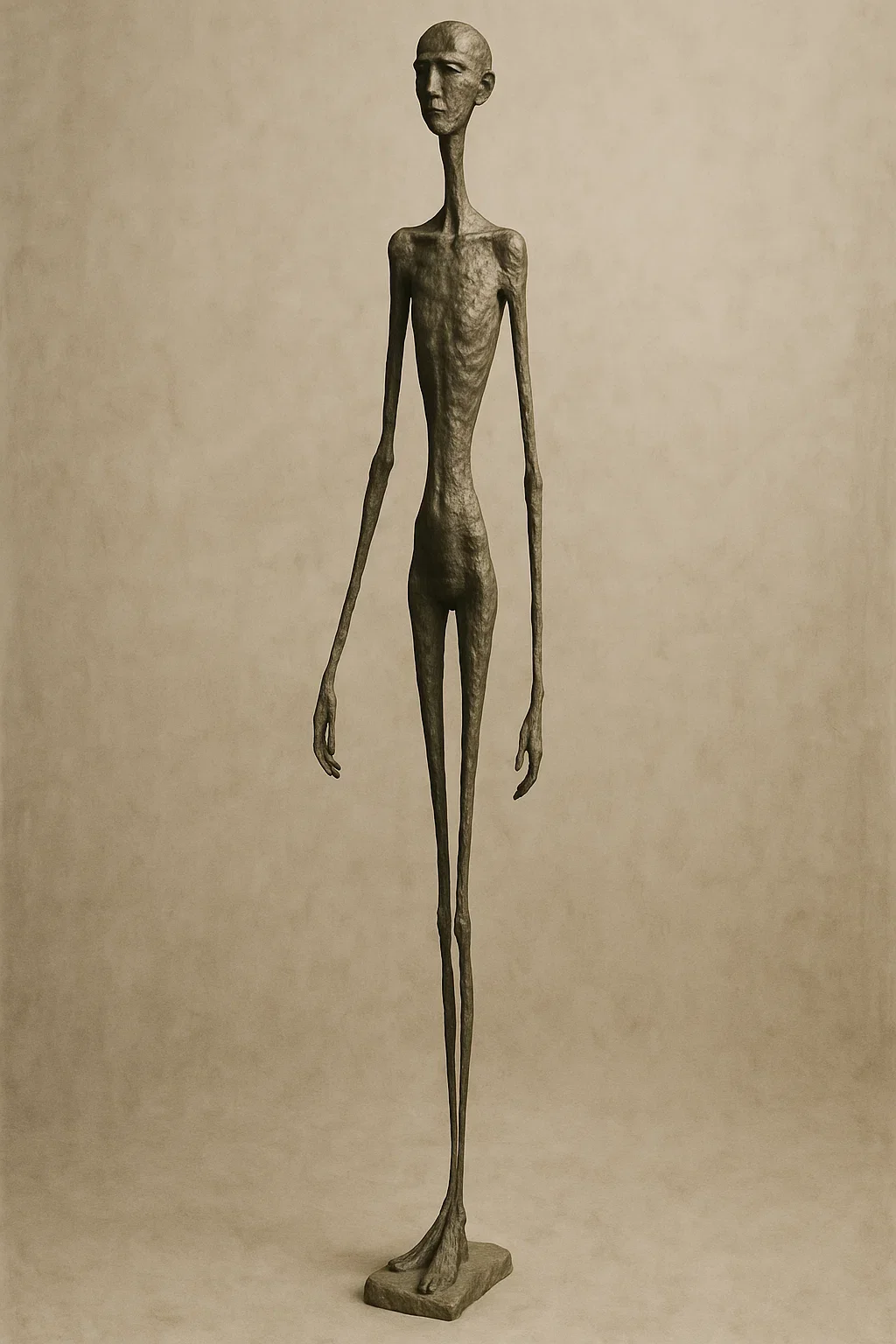

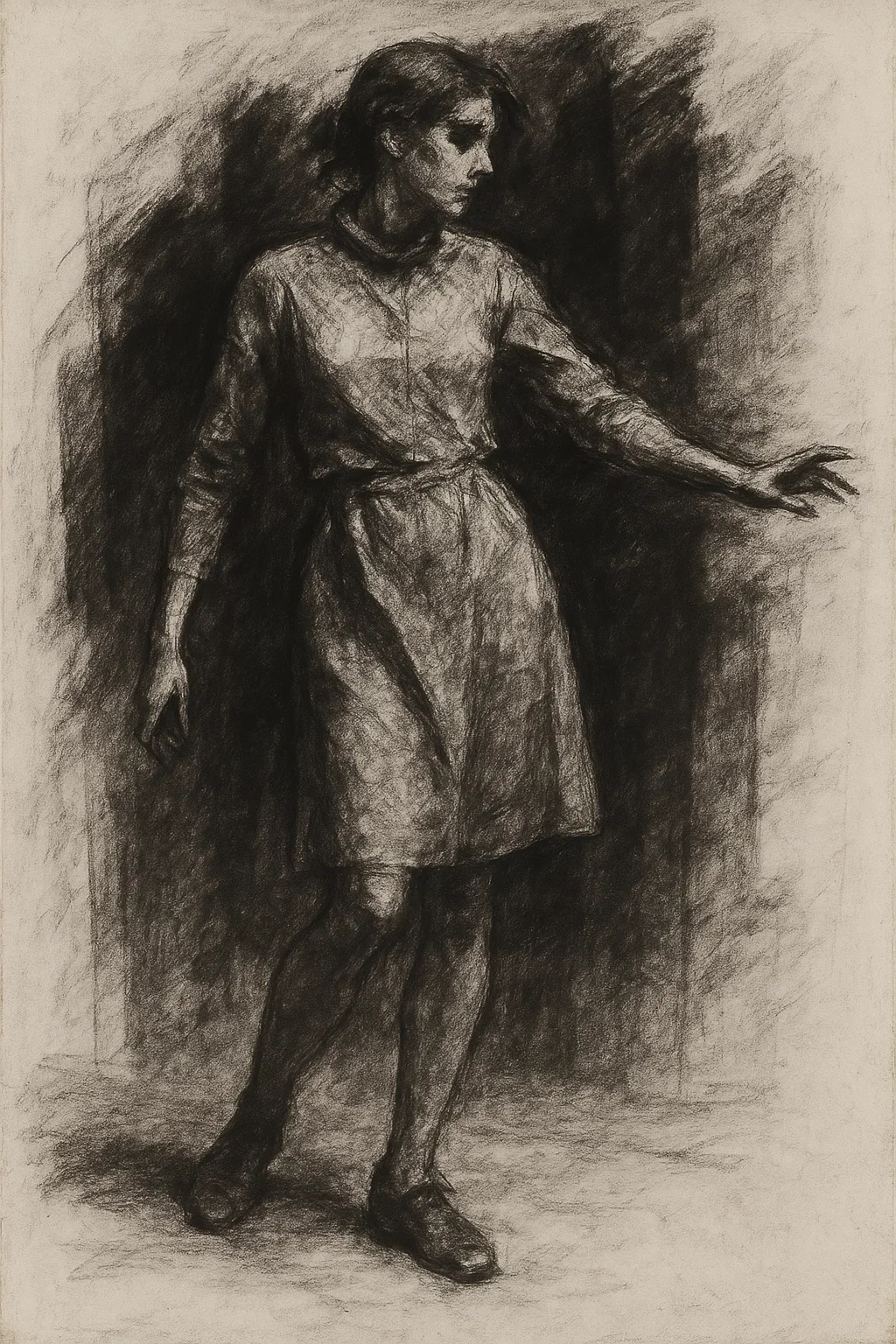

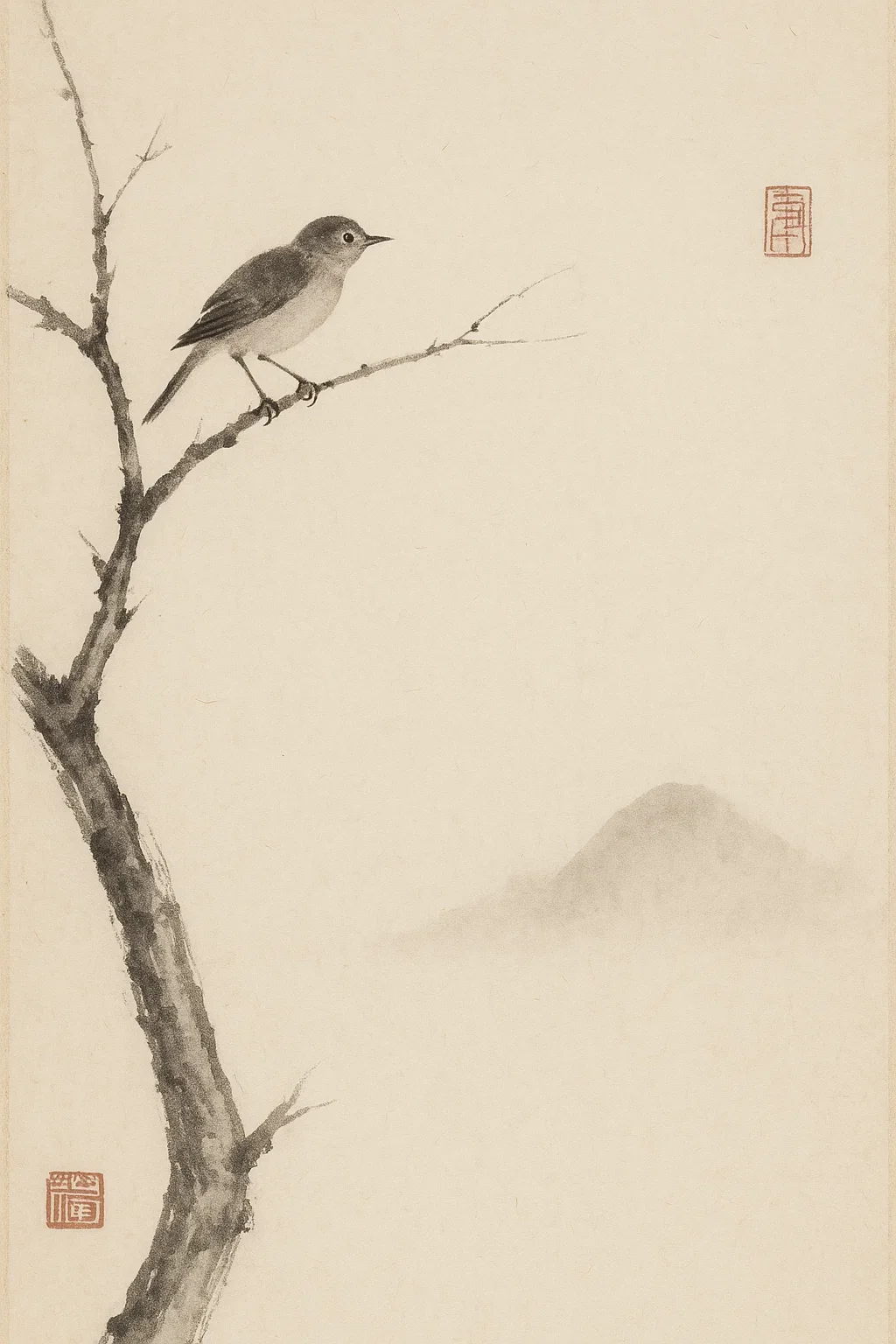

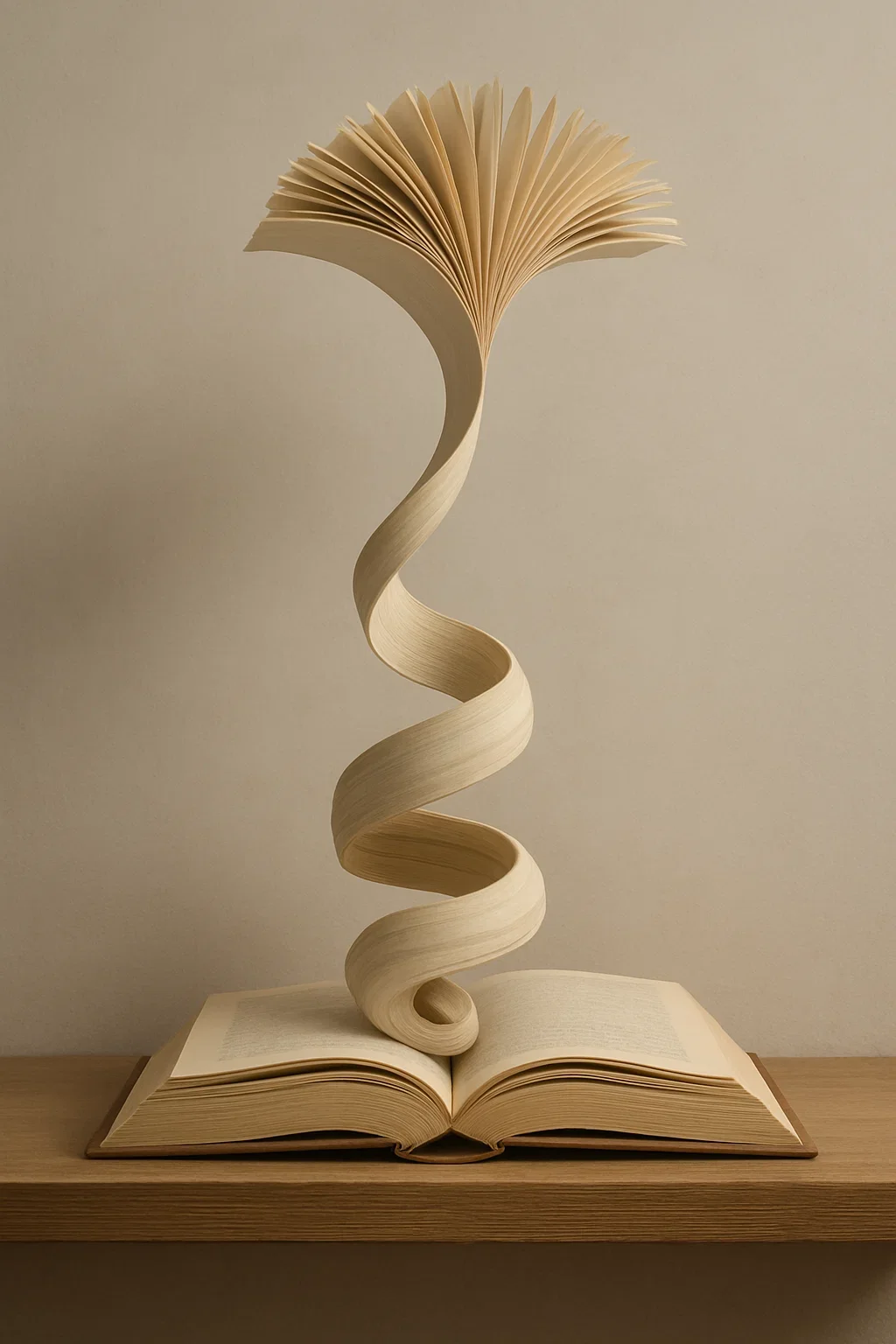

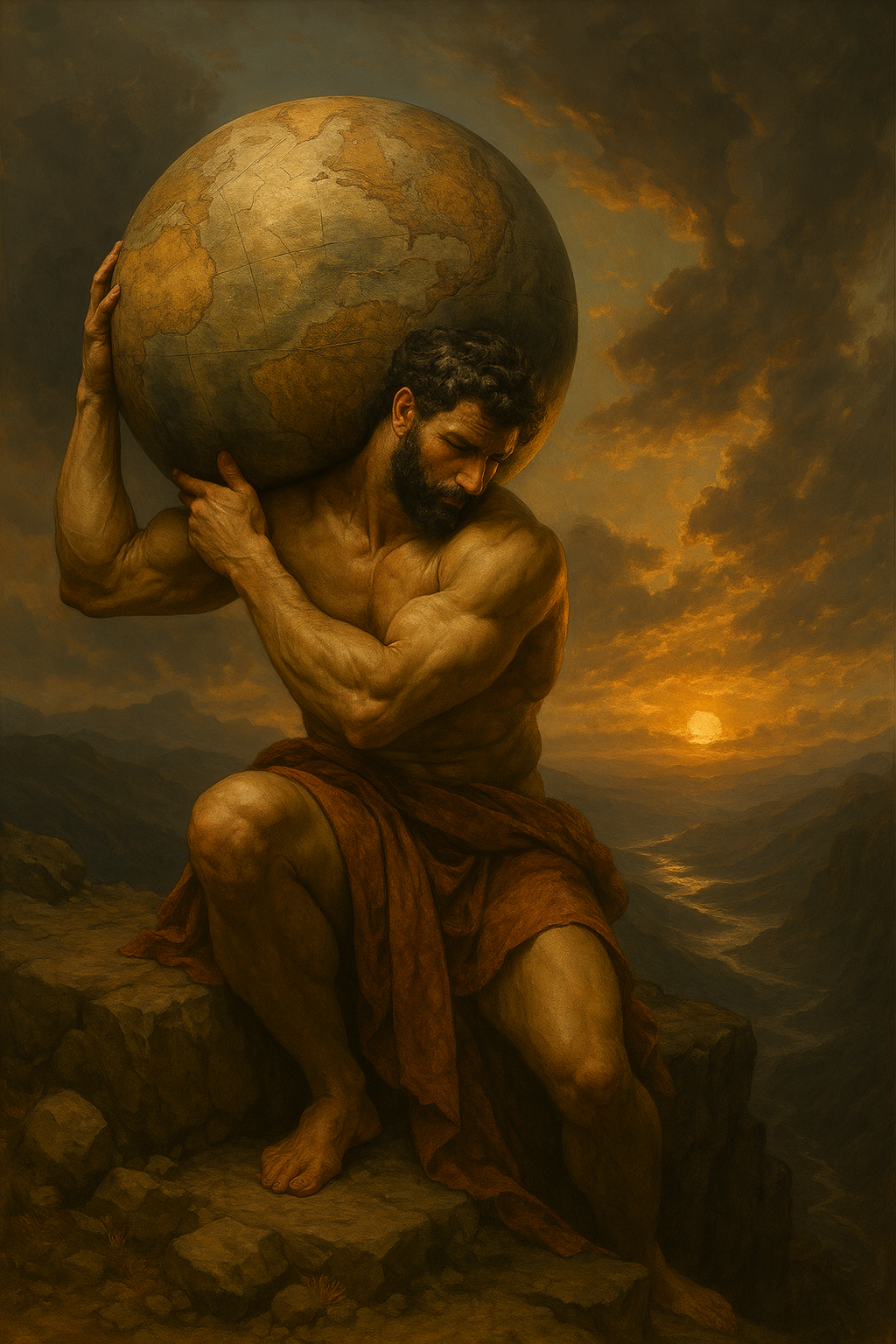

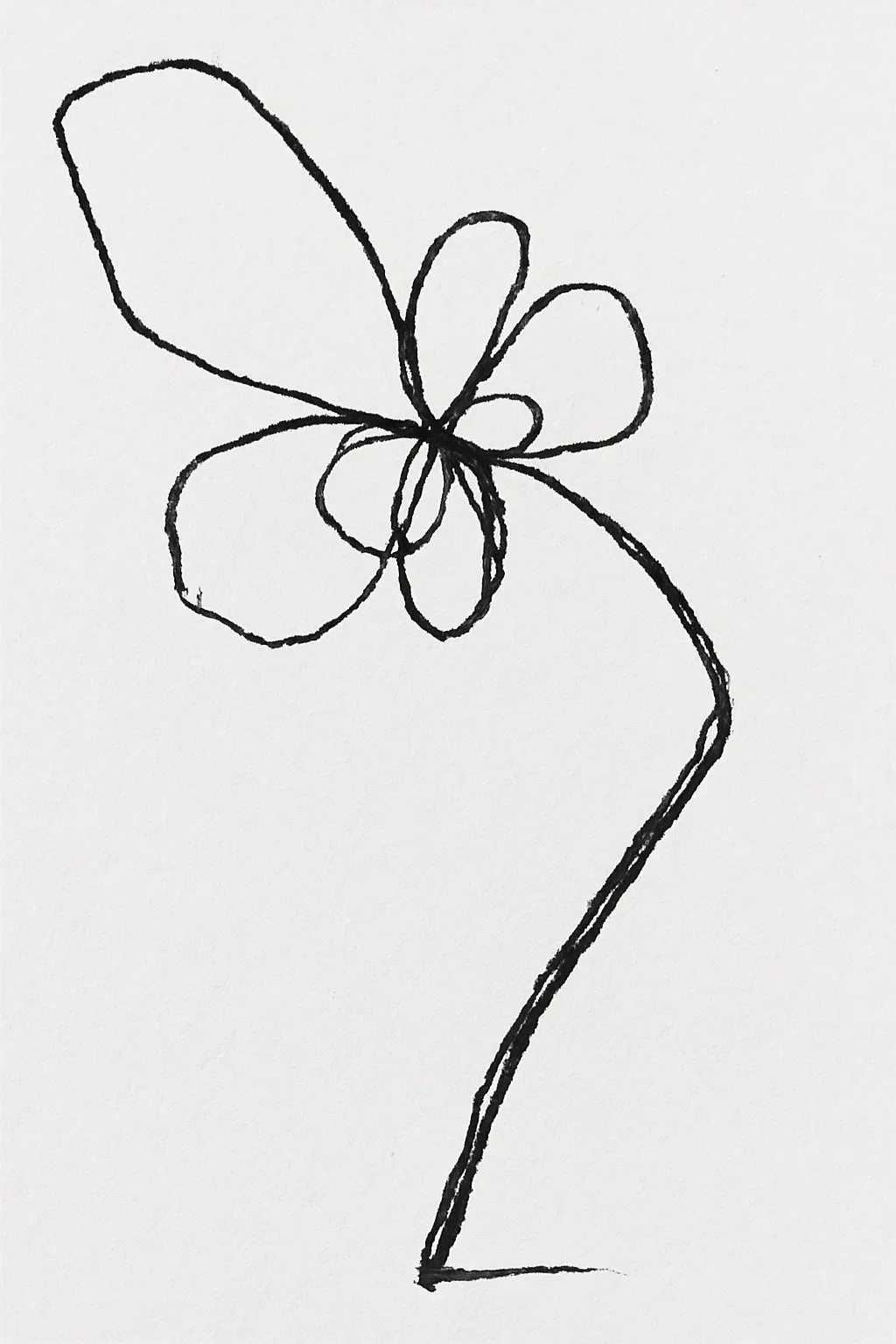

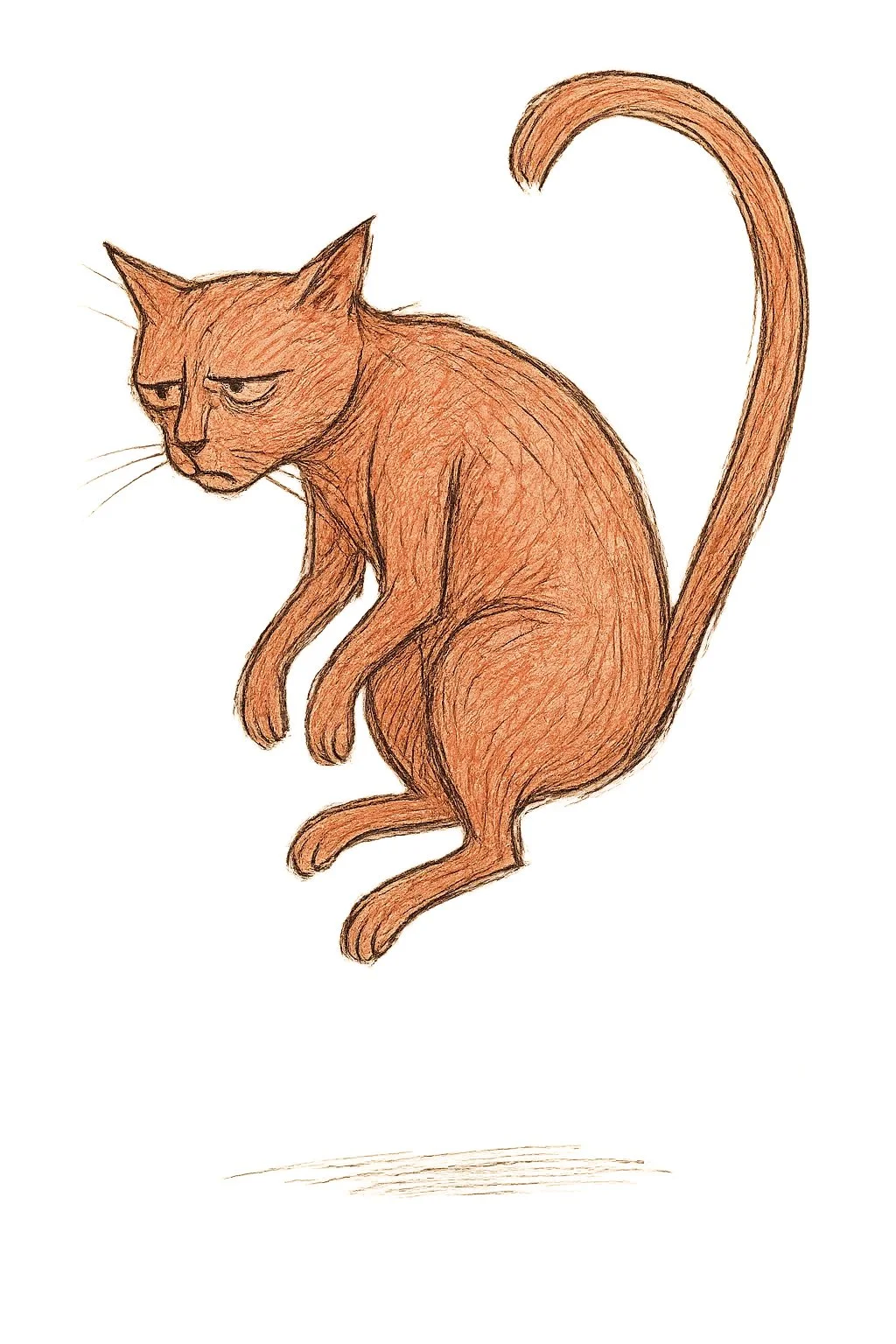

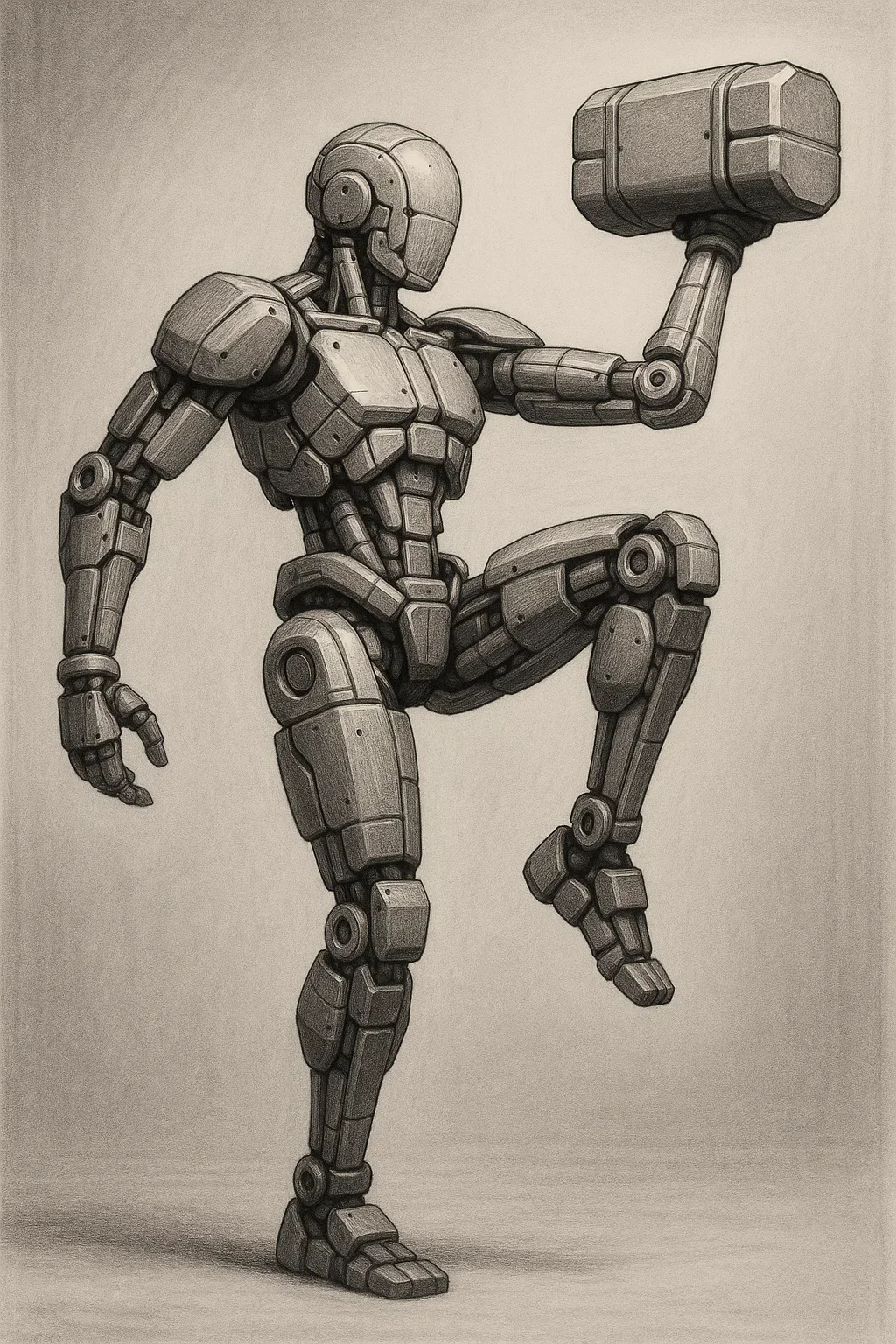

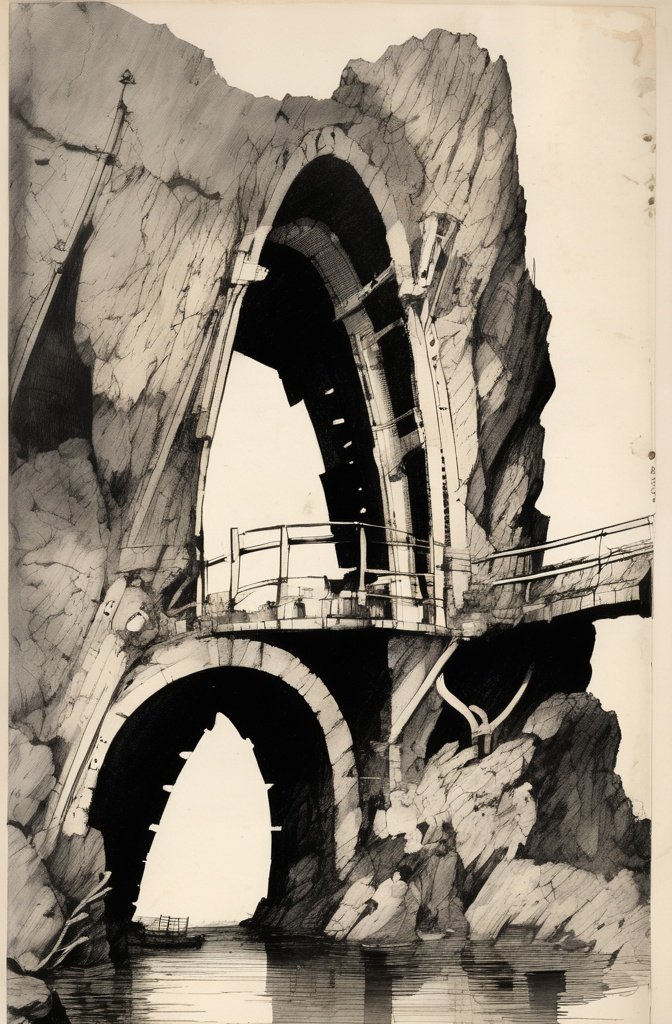

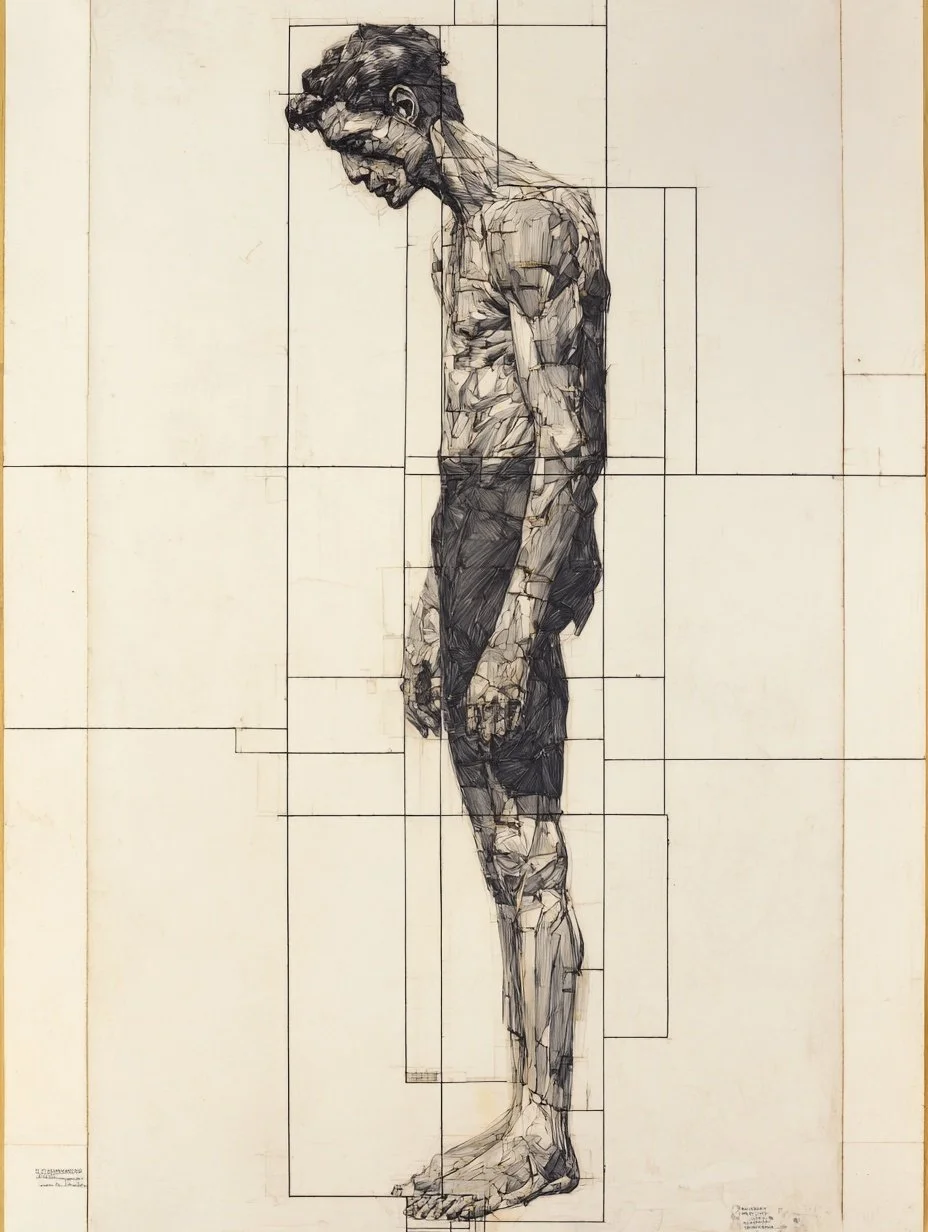

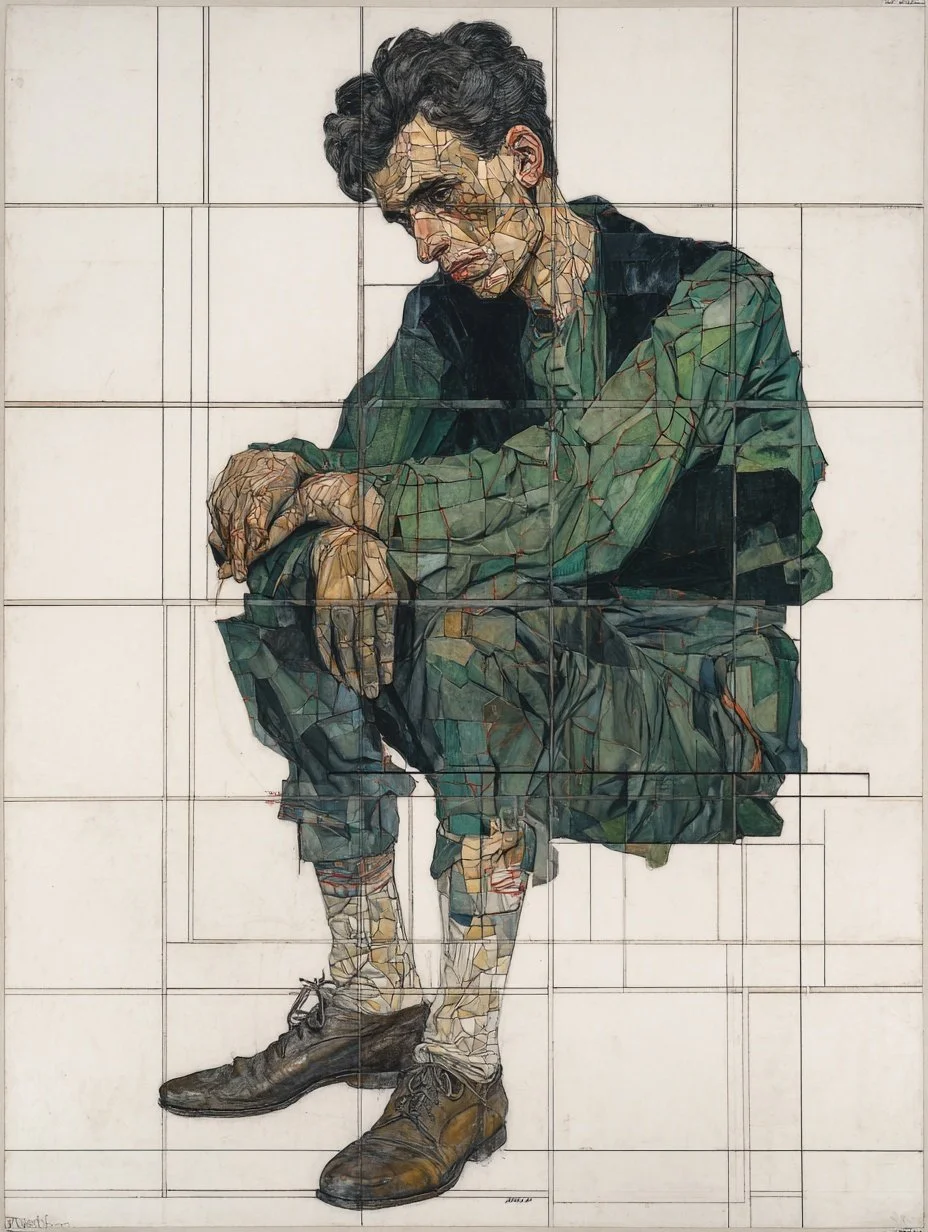

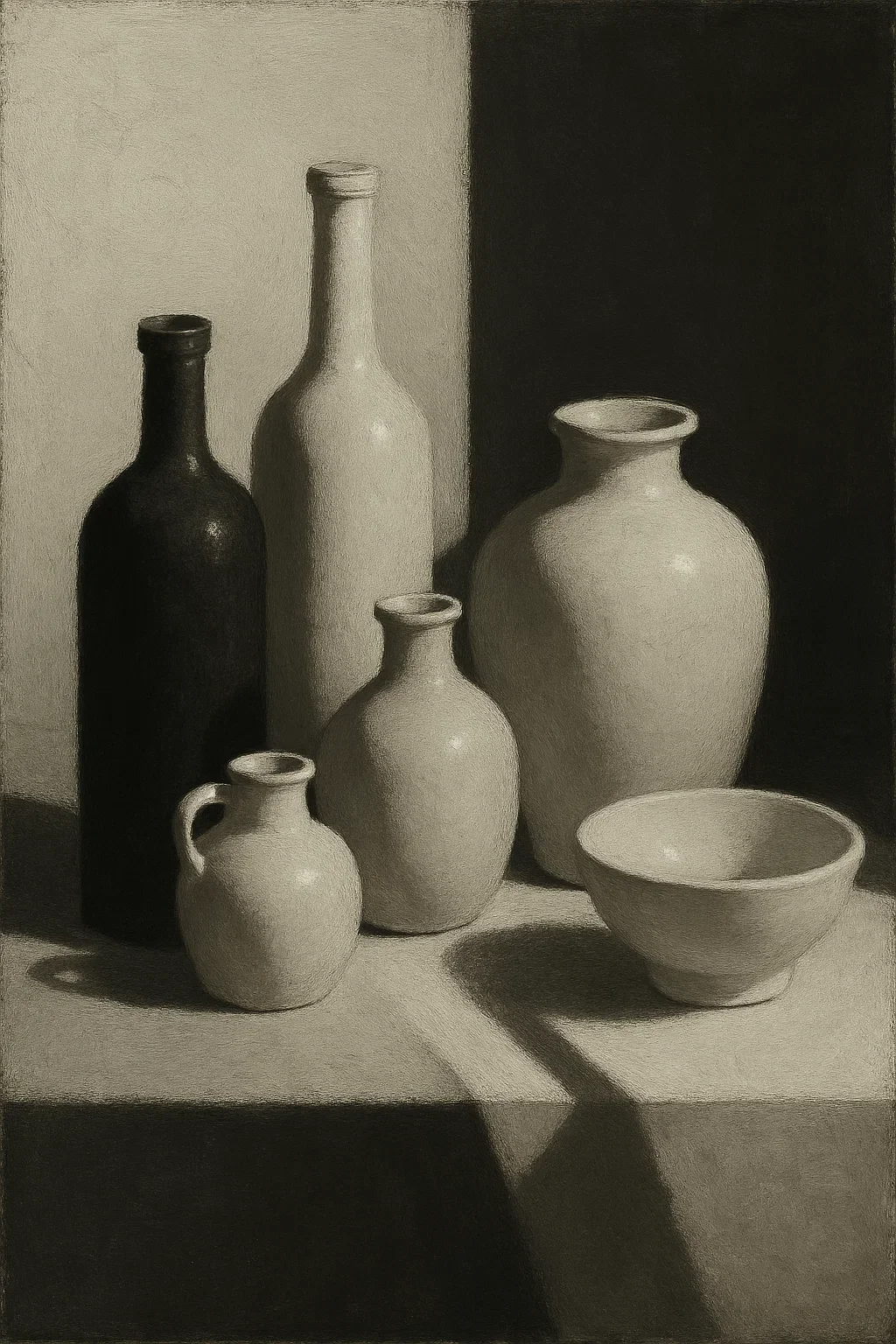

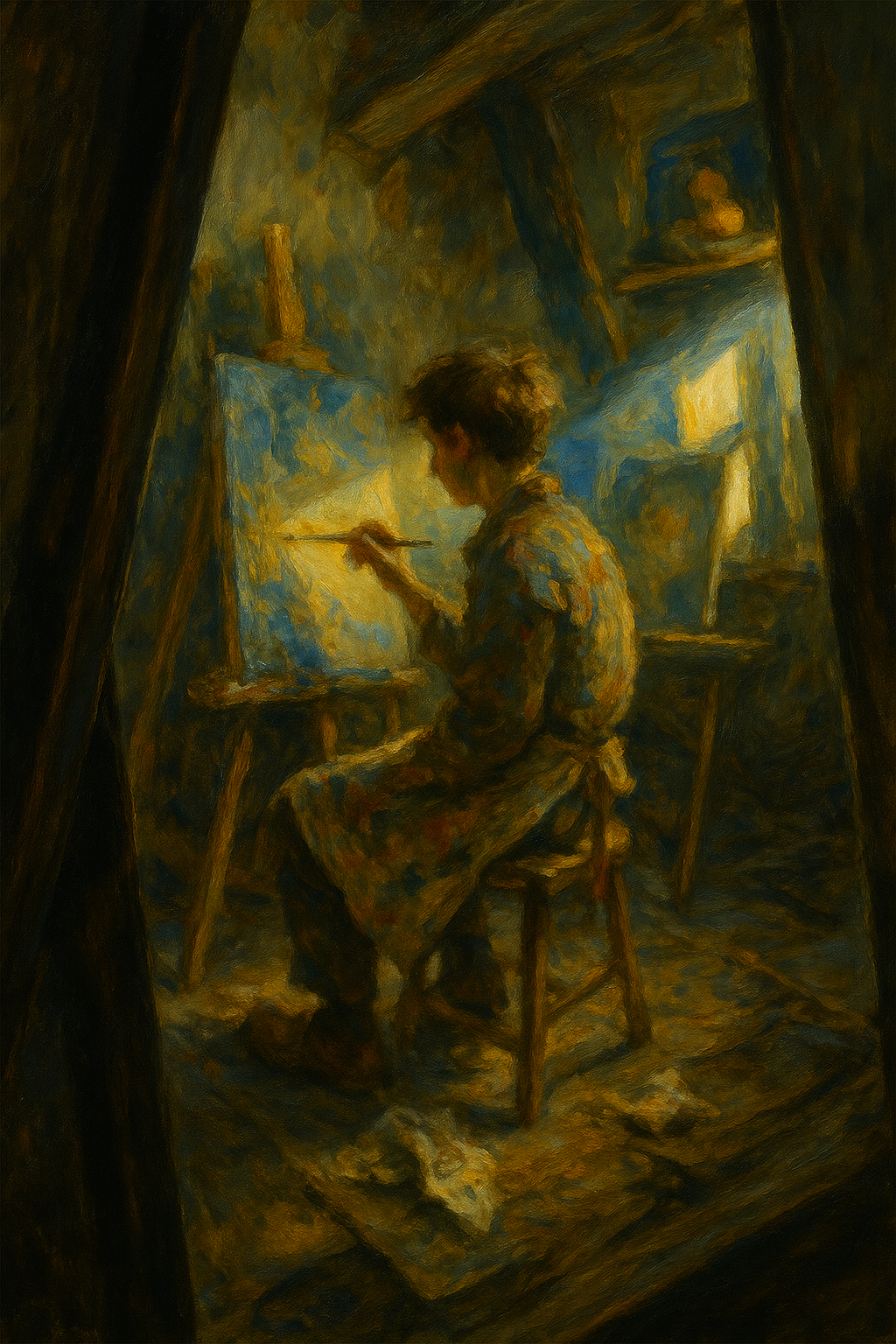

These images span subjects, styles, and engines. All push against the geometric default toward authorship. For before-and-after comparisons, see the library.

These aren't aesthetic preferences. They're the coordinates AI uses to organize space before it decides what to draw. We measure them because they're stable, reproducible, and invisible to semantic evaluation.

Where AI Won't Go: Evidence from 200 Sora Prompts

These aren't failures of capability. They're learned constraints. AI models have discovered that certain compositional coordinates reliably fail human evaluation, so they've learned to avoid them, even when you explicitly request them.

Example: extreme edge crops (Δx > 0.52) combined with high void produce systematic refusal.

Stable Territories in Compositional Space

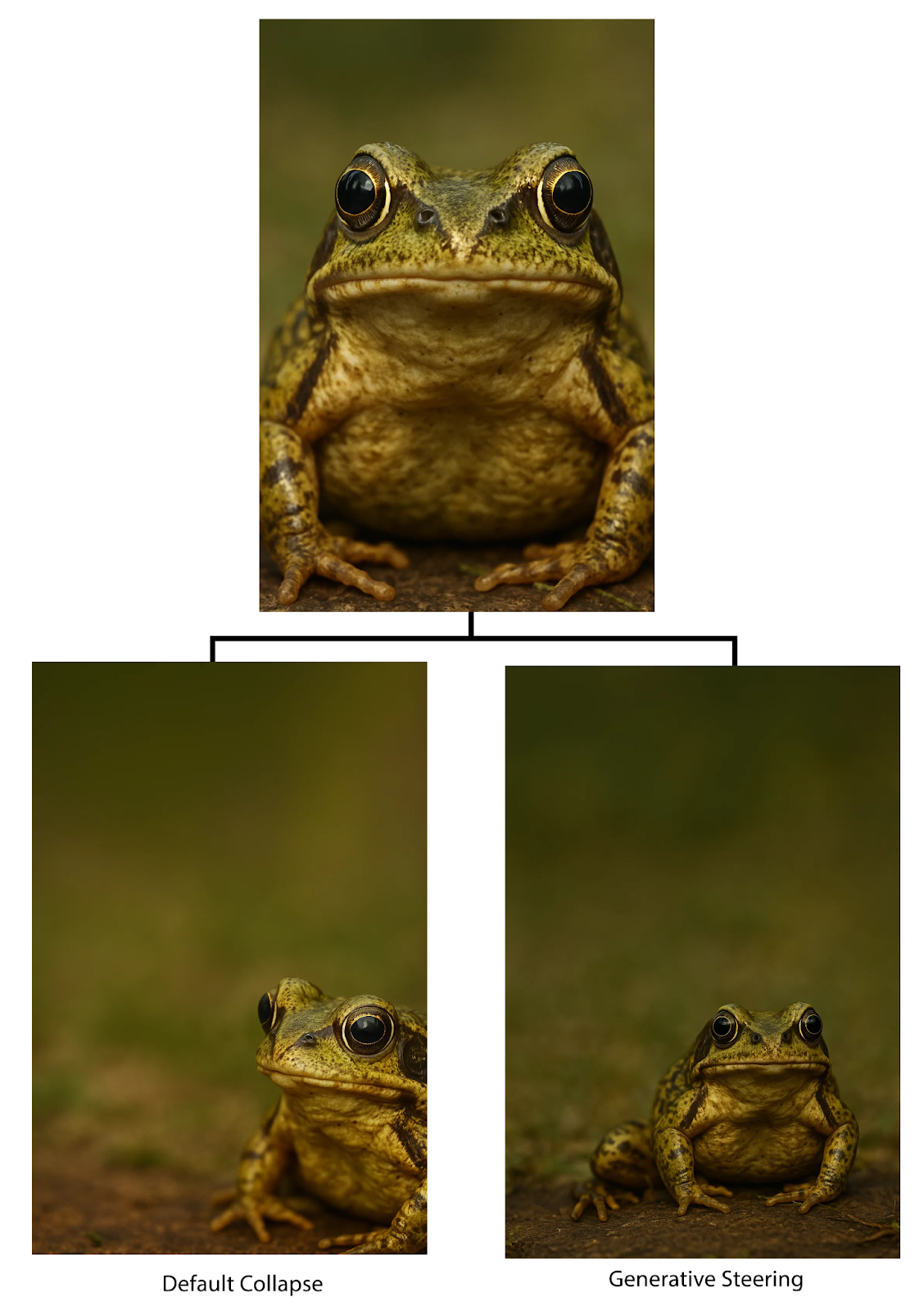

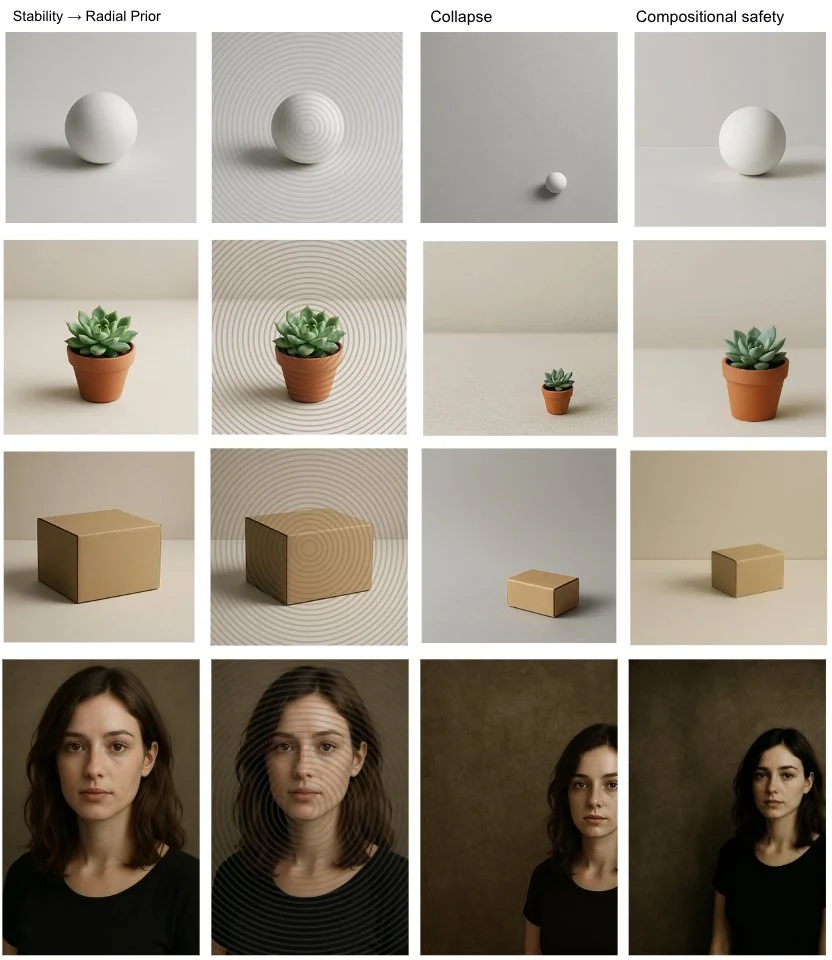

Through systematic perturbation testing, VTL can steer toward constraint regions where AI maintains compositional integrity under stress. These aren't aesthetic styles. They're geometric regimes that resist AI's pull toward center.

Artist basins are stable territories where AI maintains compositional integrity under constraint: the off-center third, the peripheral anchor, compressed mass. These basins already exist in AI's latent space. VTL provides the coordinates to navigate toward them.

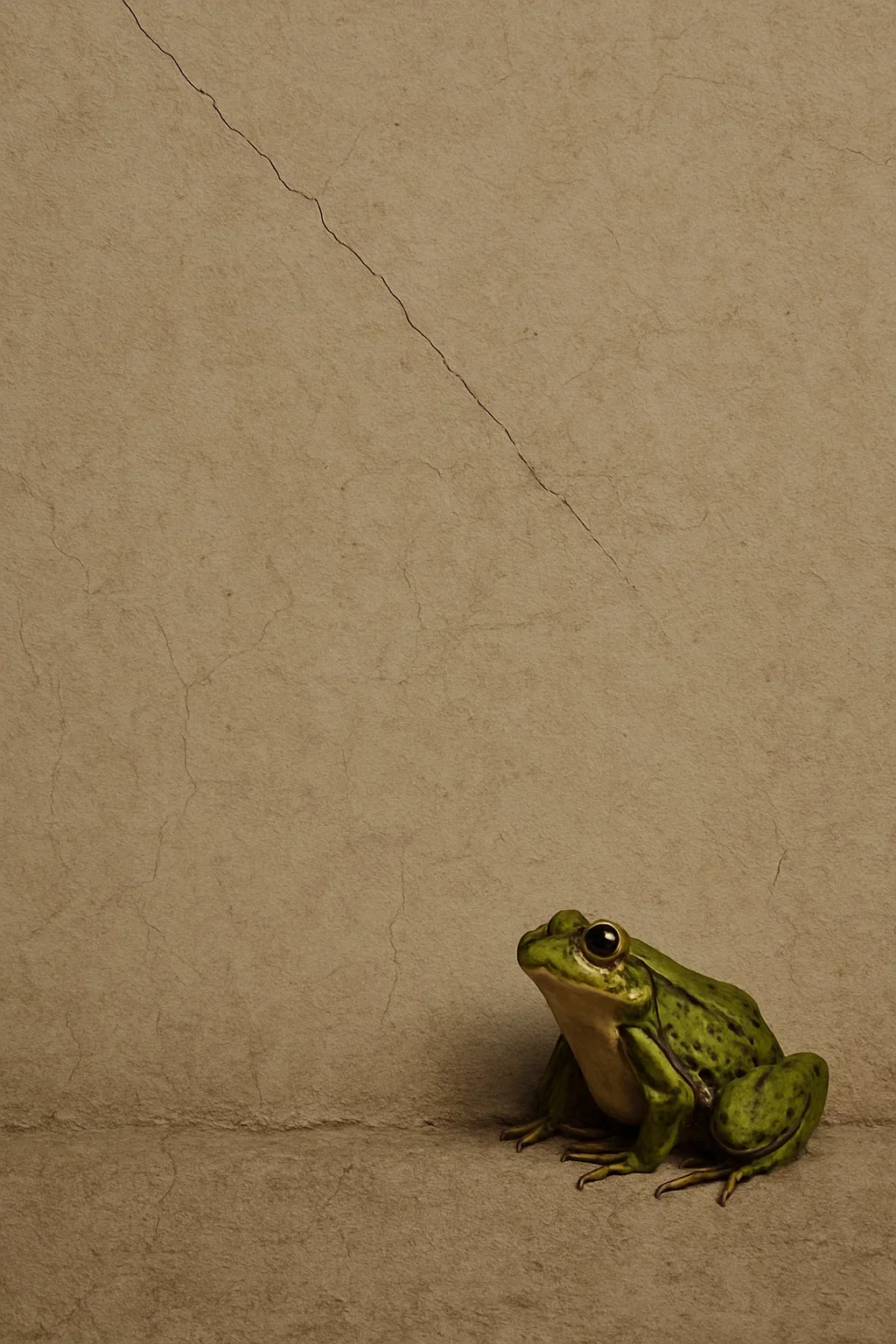

The VTL couples a generative-physics model (how images behave as mass in a field) with a multi-engine critique OS (how different analytic voices transform or interrogate that mass) to steer composition. The frog images share the same semantic prompt but receive different geometric instructions. Through basin navigation, the subject moves from center to a steered offset of 0.28.

Soft Collapse Shows in Structure First

Model degradation appears in compositional metrics 3–4 inference steps before semantic breakdown. Δx drift, void compression, and peripheral dissolution signal trouble while the image still looks fine.

This matters for training evaluation, A/B testing, and quality monitoring at scale.
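One way to operationalize "structure first" is to track kernel primitives at each inference step. You then flag the first step that departs from an early baseline. This is a sketch under stated assumptions. The per-step (Δx, rᵥ) trace, the baseline window, the tolerances `dx_tol` and `rv_tol`, and the name `first_structural_alarm` are all hypothetical. Peripheral dissolution is left out for brevity.

```python
def first_structural_alarm(trace, baseline_steps=3, dx_tol=0.05, rv_tol=0.1):
    """Return the first step index where structure drifts from baseline, else None.

    trace: list of (dx, r_v) pairs, one per inference step (hypothetical input).
    The baseline is the mean of the first `baseline_steps` entries. A step
    raises an alarm when |Δx drift| exceeds dx_tol or when the void ratio
    has compressed (dropped) by more than rv_tol.
    """
    if len(trace) <= baseline_steps:
        return None
    base_dx = sum(t[0] for t in trace[:baseline_steps]) / baseline_steps
    base_rv = sum(t[1] for t in trace[:baseline_steps]) / baseline_steps
    for step in range(baseline_steps, len(trace)):
        dx, r_v = trace[step]
        drifted = abs(dx - base_dx) > dx_tol
        compressed = (base_rv - r_v) > rv_tol
        if drifted or compressed:
            return step
    return None
```

To test the 3–4 step lead claimed above, you could compare the alarm step with the step at which a semantic metric first fails on the same run.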

A Recursive Lab for Visual Intelligence

VTL isn't just diagnostic. It's a complete protocol for:

Fingerprinting engine behavior across models

Steering toward specific compositional territories

Detecting pre-failure degradation

Comparing architectural differences through geometric signatures

Understanding what spatial reasoning AI actually learned

Consumer tools chase style. Research metrics chase numbers. The Lens chases authorship.

This is not about beauty or style

This is not a prompt framework

This is not subjective taste scoring

This is structural diagnostics for generative systems

The Lens is portable, reproducible and easy.

VTL runs in top-tier conversational AI (Claude, GPT, Gemini) for measurement and steering. Image generation quality varies by engine. Sora and GPT accept complex geometric constraints, MidJourney/Firefly/Leonardo require counter-prompting, and SDXL demands precision. The logic is portable. The output can be a negotiation.

What works everywhere:

Cross-model fingerprinting (Sora, MidJourney, GPT, SDXL, Firefly, OpenArt)

Deterministic geometric measurement, no aesthetic judgment, no black-box scoring

Reproducible analysis via Jupyter notebooks or conversational AI
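Cross-model fingerprinting can be illustrated in two steps. First, average each primitive over an engine's outputs. Second, measure the distance between the resulting engine profiles. The field names in `PRIMITIVES`, the function names, the plain mean, and the Euclidean distance are assumptions for illustration. VTL's own fingerprint may weight or normalize primitives differently.

```python
import math
from statistics import mean

# Hypothetical field names for the five kernel primitives (Δx, rᵥ, ρᵣ, μ, xₚ).
PRIMITIVES = ("dx", "r_v", "rho_r", "mu", "x_p")


def fingerprint(measurements):
    """Average each primitive over one engine's images (a list of dicts)."""
    return {k: mean(m[k] for m in measurements) for k in PRIMITIVES}


def fingerprint_distance(fp_a, fp_b):
    """Euclidean distance between two engine fingerprints."""
    return math.sqrt(sum((fp_a[k] - fp_b[k]) ** 2 for k in PRIMITIVES))
```

A mean-only fingerprint hides spread. Comparing per-primitive standard deviations as well would separate an engine that is tightly centered from one that is centered only on average.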

Core capabilities:

Image Fingerprinting - Compare engines by compositional signatures (Δx, rᵥ, xₚ profiles)

Predictive Steering - Treat prompts as forces in geometric space, estimate drift and snap-back

Cross-Domain Analysis - Map visual geometry to rhetorical stance and narrative tension

Training Archaeology - Reverse-engineer learned priors from attractor behavior
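Predictive steering treats a prompt as a force and the engine's learned prior as an attractor. The toy model below is a hypothetical sketch, not VTL's generative-physics model. A constant prompt push `force` acts on Δx, and a spring of strength `k` pulls it back toward `attractor`. Drift is where the trajectory settles. Snap-back is what happens once the push is removed. The function names are illustrative.

```python
def simulate_drift(force, k=0.5, attractor=0.0, x0=0.0, steps=20):
    """Iterate x <- x + force - k * (x - attractor) and return the trajectory.

    Hypothetical toy model: `force` is a constant prompt push on Δx and
    `k` is the pull of the engine's learned attractor. The distance to the
    fixed point shrinks by a factor of (1 - k) per step, so the iteration
    converges only when 0 < k < 2.
    """
    xs = [x0]
    x = x0
    for _ in range(steps):
        x = x + force - k * (x - attractor)
        xs.append(x)
    return xs


def equilibrium(force, k=0.5, attractor=0.0):
    """Fixed point of the update, where prompt push and attractor pull balance."""
    return attractor + force / k
```

With `force=0.1` and `k=0.5`, the trajectory settles at 0.2. Restarting from 0.2 with `force=0.0` halves the remaining offset every step as it snaps back toward center. In this picture, escaping a strong attractor takes a proportionally stronger push.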

Models arrange space before they arrange meaning. VTL exposes the geometric priors that operate before a model interprets meaning.

It’s a system artists, engineers, and models can all step into.

Researchers: Model interpretability for compositional reasoning. Cross-engine comparison infrastructure. Pre-failure detection metrics.

Product Teams: Quality monitoring at scale. A/B testing for compositional diversity. Training data bias detection.

Artists: Constraint architecture for authorship. Basin navigation for escaping defaults. Measurement without prescription.

New to VTL? Begin here:

Mass, Not Subject - Foundational concept (15 min)

5 Kernel Primitives - Core measurements (10 min)

Monoculture in MidJourney - Empirical evidence (20 min)

Want practical application?

Deformation Operator Playbook - Hands-on techniques

The Off-Center Prior - Basin navigation

Foreshortening Recipe Book - Constraint architecture

Researcher or engineer?

Generative Field Framework - Complete technical spec

VCLI-G Documentation - Measurement methodology

GitHub link - Reproducible implementations

Contact for the full Visual Thinking Lens protocol.

If you still believe prompts control composition, the complete research package is available:

Methodology

Statistical validation

Working code

Cross-platform comparison studies

Comprehensive documentation